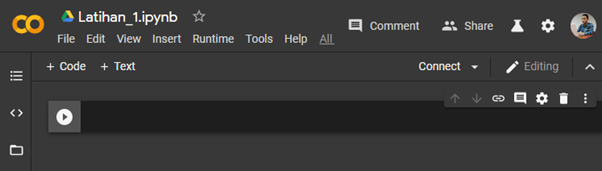

Collaboratory is a Google incubation project created for collaboration (as the name suggests), training, and research related to Machine Learning. It is a Jupyter notebook environment that doesn't require any configuration and runs entirely in the Google cloud.

## Import files into Google Colaboratory: install

Another possible option is to store your data on Google Drive and mount your Drive on the Colab machine using google-drive-ocamlfuse. To mount Google Drive with google-drive-ocamlfuse:

1. Install google-drive-ocamlfuse:

```
!apt-get install -y -qq software-properties-common python-software-properties module-init-tools
!add-apt-repository -y ppa:alessandro-strada/ppa 2>&1 > /dev/null
!apt-get -y install -qq google-drive-ocamlfuse fuse
```

2. Authenticate and get credentials:

```python
from google.colab import auth
from oauth2client.client import GoogleCredentials

auth.authenticate_user()  # opens the Google sign-in prompt for this session
creds = GoogleCredentials.get_application_default()
```

3. Set up google-drive-ocamlfuse:

```python
import getpass
```
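As an alternative to the ocamlfuse steps (a different technique, not the one described above), Colab also ships its own `drive.mount` helper in `google.colab`, which mounts Google Drive without installing anything. The sketch below assumes the commonly used mount point `/content/drive` and that your files appear under its `MyDrive` folder; both paths and the helper names `mount_drive` and `drive_path` are assumptions for illustration. Outside Colab the import fails and `mount_drive` simply returns `False`.

```python
import posixpath

# Assumed paths: /content/drive is the usual Colab mount point, and Drive's
# root folder is assumed to appear as "MyDrive" beneath it.
MOUNT_POINT = "/content/drive"
DRIVE_ROOT = "MyDrive"


def mount_drive(mount_point: str = MOUNT_POINT) -> bool:
    """Mount Google Drive with Colab's built-in helper (hypothetical wrapper).

    Returns False when not running inside Colab, where google.colab is absent.
    """
    try:
        from google.colab import drive
    except ImportError:
        return False
    drive.mount(mount_point)  # prompts for authorization in the notebook
    return True


def drive_path(*parts: str, mount_point: str = MOUNT_POINT) -> str:
    """Build a path to a file stored in your Drive once it is mounted."""
    return posixpath.join(mount_point, DRIVE_ROOT, *parts)
```

In a Colab cell, calling `mount_drive()` and then `open(drive_path("data", "train.csv"))` would read a hypothetical `data/train.csv` from your Drive.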

## Import files into Google Colaboratory: code

Google Colab offers many ways to import your data. The first is uploading files from your computer, which you can do with drag-and-drop or with code, using the `files` utilities in the `google.colab` module. The upload was super slow, though (it took me more than 2 minutes to upload 30 MB), and no, it wasn't my internet.
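If you go the code route, `files.upload()` returns a dictionary mapping each uploaded file name to its contents as bytes. Here is a minimal sketch, assuming the upload is a UTF-8 encoded CSV file, that parses it straight from that dictionary with the standard `csv` module; `read_uploaded_csv` and the file name `data.csv` are hypothetical.

```python
import csv
import io
from typing import Dict, List


def read_uploaded_csv(uploaded: Dict[str, bytes], name: str) -> List[List[str]]:
    """Parse one CSV file from the dict returned by google.colab's files.upload().

    Assumes the file is UTF-8 encoded text.
    """
    text = uploaded[name].decode("utf-8")
    # newline="" lets the csv module handle line endings and quoted newlines itself
    return list(csv.reader(io.StringIO(text, newline="")))
```

In Colab you would run `from google.colab import files`, then `uploaded = files.upload()` and `rows = read_uploaded_csv(uploaded, "data.csv")`. Outside Colab you can pass any dictionary of the same shape, such as `{"data.csv": b"a,b\n1,2\n"}`.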

While building a Deep Learning model, the first task is to import datasets, and this can be very hectic. Since Colab instances are created on the fly, the data gets deleted once the notebook is closed, so we need to transfer it again and again, and there isn't a simple way to do that unless your data is hosted somewhere you can fetch it from with something like wget. So, I compiled a list of ways to import data into Google Colab:

1. Uploading files from your local system
2. Using wget
3. Importing a Kaggle dataset into Google Colaboratory

Google Colaboratory provides a Python module, `google.colab`, with some utility tools, one of which transfers files from and to your local system. Calling `files.upload()` creates a button that you can use to select the files you want to upload. But in my experience so far, this is the worst way, since the upload is very slow.

The Google Colab machines run a Debian-based Linux, so the simplest way to download data is wget or your favorite command-line download tool.

To import a Kaggle dataset, go to your Kaggle account and create a new API token from the My Account section; a kaggle.json file will be downloaded to your PC.
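The wget and Kaggle routes can be sketched in plain Python, assuming only the standard library. `urllib.request.urlretrieve` stands in for `wget -O` and skips the download when the file already exists (this only saves work within one session, since the instance is wiped afterwards). The uploaded `kaggle.json` is copied to `~/.kaggle/kaggle.json` with owner-only permissions, the location the Kaggle command-line tool reads by default, and a downloaded `.zip` is unpacked with `zipfile`. The function names are hypothetical helpers, not part of any library.

```python
import os
import shutil
import stat
import urllib.request
import zipfile
from typing import List, Optional


def download_if_missing(url: str, dest: str) -> str:
    """Fetch url into dest (roughly `wget -O dest url`) unless dest already exists.

    The check only helps within one session: a fresh Colab instance starts empty.
    """
    if not os.path.exists(dest):
        parent = os.path.dirname(dest)
        if parent:
            os.makedirs(parent, exist_ok=True)
        urllib.request.urlretrieve(url, dest)
    return dest


def install_kaggle_token(token_path: str, home: Optional[str] = None) -> str:
    """Copy an uploaded kaggle.json into ~/.kaggle/ with owner-only permissions."""
    home = home if home is not None else os.path.expanduser("~")
    kaggle_dir = os.path.join(home, ".kaggle")
    os.makedirs(kaggle_dir, exist_ok=True)
    target = os.path.join(kaggle_dir, "kaggle.json")
    shutil.copyfile(token_path, target)
    # Equivalent to `chmod 600`; the Kaggle CLI warns if others can read the key.
    os.chmod(target, stat.S_IRUSR | stat.S_IWUSR)
    return target


def extract_zip(archive: str, dest_dir: str) -> List[str]:
    """Unpack a downloaded dataset archive into dest_dir and list its members."""
    with zipfile.ZipFile(archive) as zf:
        zf.extractall(dest_dir)
        return zf.namelist()
```

In Colab, after uploading `kaggle.json`, you might run `install_kaggle_token("kaggle.json")`, then `!kaggle datasets download -d <owner>/<dataset>` and `extract_zip("<dataset>.zip", "data")`, where `<owner>/<dataset>` is a placeholder for the dataset you want.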

Google Colaboratory is a Jupyter Notebook environment that doesn't require any setup and runs entirely in the cloud. The notebooks are stored on Google Drive, so they can be easily shared. On top of all this, one of its major selling points is free GPU computation for up to 12 hours in a single session. For all these reasons, I have been writing all my Python code on Google Colab for the last couple of weeks. But one of the major issues I faced was importing data into the notebook.